AI Skills Academy

AI tools are reshaping how we work, learn, and create. When we asked ourselves how to democratize AI upskilling and literacy across the University community, we landed on a simple premise: meet learners where they are, on their schedules.

From December 10, 2025 to January 10, 2026, UVA Online Education and Digital Innovation (OEDI) partnered with leading skills assessment platform CodeSignal to deliver our first-ever AI Skills Academy. Through no-cost, asynchronous AI upskilling, 50 UVA students and 75 faculty and staff increased AI literacy and confidence across several core competencies.

Program Structure & Delivery

Over a 40-day access period, 175 participants engaged in fully asynchronous, self-paced AI literacy coursework. Based on experience level, participants chose between two learning tracks.

To supplement the virtual coursework, participants were invited to join several synchronous opportunities to share insights and troubleshoot concerns. These included:

- An optional kickoff webinar on December 3, 2025

- Pre- and post-learning surveys to measure growth and identify areas of interest

- Weekly virtual office hours

- In-person retrospectives at our office to share real-time feedback and compare perspectives

This structure ensured that participants could learn on their own time while having a breadth of resources and engagement opportunities at their disposal.

Engagement Data

User Activity Comparison

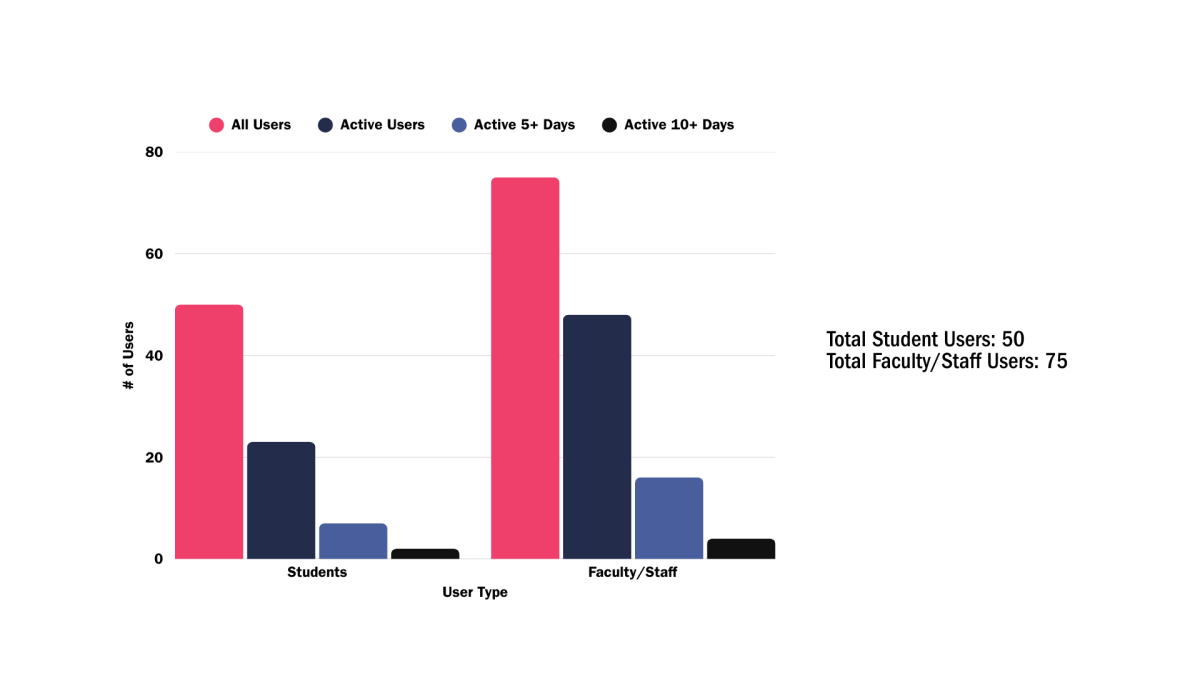

Among all participants, 64% of faculty/staff and 46% of students were active users. "Active" users are defined as those who logged into CodeSignal and completed at least one practice exercise.

Depth of User Engagement

On average, faculty/staff users spent more days learning on the platform, signaling more sustained engagement with CodeSignal’s content and a commitment to learning beyond surface-level exploration.

| Metric | Student Users | Faculty/Staff Users |
|---|---|---|
| Active 5+ days (% of all users) | 14% | 21% |
| Active 10+ days (% of all users) | 4% | 11% |
| Average active days | 3.6 | 6.9 |

Practice Completion Intensity

Higher platform engagement aligned with higher practice exercise completion, signaling more consistent learning among the faculty/staff cohort.

| Milestone | Student Users | Faculty/Staff Users |
|---|---|---|
| 25+ Practices Completed | 28% (14/50) | 42.7% (32/75) |
| 50+ Practices Completed | 18% (9/50) | 28% (21/75) |
| 100+ Practices Completed | 6% (3/50) | 5.3% (4/75) |
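For readers who want to check the milestone percentages, here is a minimal Python sketch that recomputes each share from the counts in the table. The variable and function names (`COHORT_SIZES`, `MILESTONE_COUNTS`, `share`) are illustrative assumptions and not part of any CodeSignal export. Rounding to one decimal place is also an assumption, chosen to match the figures shown.

```python
# Cohort sizes and milestone counts, copied from the table above.
COHORT_SIZES = {"students": 50, "faculty_staff": 75}
MILESTONE_COUNTS = {
    "25+": {"students": 14, "faculty_staff": 32},
    "50+": {"students": 9, "faculty_staff": 21},
    "100+": {"students": 3, "faculty_staff": 4},
}


def share(count: int, cohort_size: int) -> float:
    """Percentage of a cohort that reached a milestone, to one decimal place."""
    return round(count / cohort_size * 100, 1)


# Recompute every cell of the table from the raw counts.
rates = {
    milestone: {
        cohort: share(count, COHORT_SIZES[cohort])
        for cohort, count in counts.items()
    }
    for milestone, counts in MILESTONE_COUNTS.items()
}

for milestone, by_cohort in rates.items():
    print(milestone, by_cohort)
```

Keeping the raw counts next to the percentages makes it easy to see that, at the 100+ level, the two cohorts differ by a single learner.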

The "Super User" Phenomenon

While practice completion varied between the student and faculty/staff cohorts, both produced a similar number of "super users" (3 students and 4 faculty/staff), defined as users who completed at least 100 practice exercises over the program period.

Key Insights

Confidence Gains Across the Board

Participants reported notable confidence gains in key AI skills over the 40-day period, with the strongest growth emerging in the following core competencies:

- Prompting skills: +25% confidence gain (3.41 → 4.25/5)

- Personal applications of AI: +28% confidence gain (2.92 → 3.75/5)

- Awareness of AI ethics & limitations: +20% confidence gain (3.37 → 4.05/5)
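The percentage gains above are consistent with a relative change from the pre-survey mean to the post-survey mean on the 5-point scale. The short Python sketch below reproduces them under that assumption. The formula is inferred from the reported numbers rather than stated by the survey, and the names `scores` and `relative_gain` are illustrative.

```python
# Mean self-reported confidence (pre, post) on a 5-point scale, from the list above.
scores = {
    "Prompting skills": (3.41, 4.25),
    "Personal applications of AI": (2.92, 3.75),
    "Awareness of AI ethics & limitations": (3.37, 4.05),
}


def relative_gain(pre: float, post: float) -> float:
    """Percent change from the pre-survey mean to the post-survey mean.

    Assumption: gains are measured relative to the pre-survey score,
    not as a share of the 5-point scale.
    """
    return (post - pre) / pre * 100


# Round to whole percentages, matching how the gains are reported.
gains = {
    skill: round(relative_gain(pre, post))
    for skill, (pre, post) in scores.items()
}

for skill, gain in gains.items():
    print(f"{skill}: +{gain}%")
```

Measured instead as points on the 5-point scale, the same gains are roughly 0.7 to 0.8 points each, which may be the easier figure to compare across future cohorts.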

Break Timing Works

While it can be tempting to assume that faculty, staff, and students dislike learning outside of the scheduled academic term, learner feedback overwhelmingly favored delivery during academic and professional breaks.

Professional Audiences are Ready

The 64% faculty/staff participation rate, along with sustained engagement during outreach and wrap-up phases, signals strong demand for AI upskilling among professional audiences.

Platform Feedback

While CodeSignal effectively built foundational skills, learners identified opportunities for improvement. Some learners preferred a more regimented and less gamified format, while others found the first track too easy and the second too difficult.

Learner Feedback

"I didn't realize prompting was such a useful skill...it has saved me time when I need the LLM to respond accurately the first try."

- Second-year student

"I see more use cases for AI than I did previously, especially knowing how to better set up the context and provide constraints."

- UVA staff member

"I plan to use the skills I've learned to create better prompts and create more refined responses."

- Third-year student

"I found [CodeSignal] enjoyable and a little bit addictive in the best way. I liked doing this over break."

- UVA faculty member

What's Next

The AI Skills Academy demonstrated that accessible, well-timed AI literacy programming can drive meaningful engagement and confidence growth across diverse university audiences. We seek to build on these insights as we scale professional development opportunities, both for UVA students and UVA faculty and staff.

Have questions about the program? Interested in participating in future learning pilots?

Share your thoughts with us by filling out this form or contacting oedi@virginia.edu.